Language Machines 1

I am slowly making my way through Leif Weatherby's pithy book Language Machines. I bought myself a hard copy after coming across references to it in my reading about AI and LLMs. It's a dense landscape, and the book is equally dense - and I am too - so a triply dense cake! Using AI for a book summary...

Language Machines by Leif Weatherby argues that AI language models do not simulate human cognition but rather create culture. It proposes that to understand generative AI, we must view these models as "culture machines" and "large literary machines". It attempts to move the AI discourse away from science fiction tropes and the "apocalypse vs. optimization" debate, instead offering a robust theoretical framework from the humanities to understand AI as a powerful, socially embedded cultural force.

LLMs underscore that language is itself a meaning-making medium with an innate poetic structure. It is an invisible poetic that acts as a dynamic heat map, a system that generates coherence without needing to be anchored in a human interior. AI is a cultural technology in the way it weaves those threads... It is a valuable read, and also slow going. Since I had a pencil handy for underlining and making marginal notes, I also made little sketches, and that started this series of drawings...

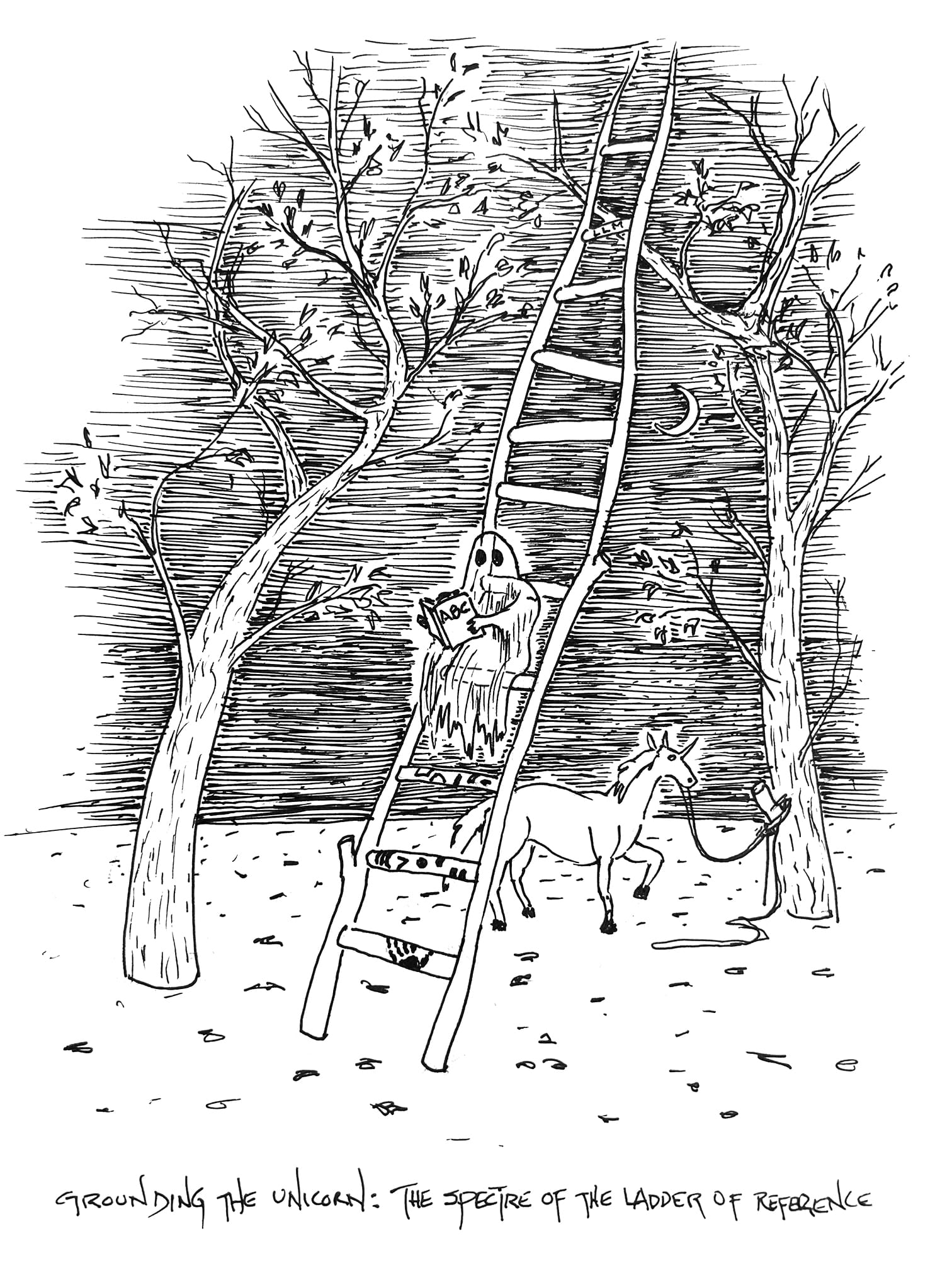

The Ladder of Reference is a concept in philosophy of language and semiotics about how words connect to reality through chains of meaning. Words don't directly touch reality; they build like rungs on a ladder from the ground up. Each rung references the one below, forming a chain from concrete reality up to abstract language.

Weatherby asks...

- What about unicorns? Things that never existed at the bottom rung?

- What about AI-generated images? Where's their bottom rung?

- What about words generated by LLMs from statistical patterns?

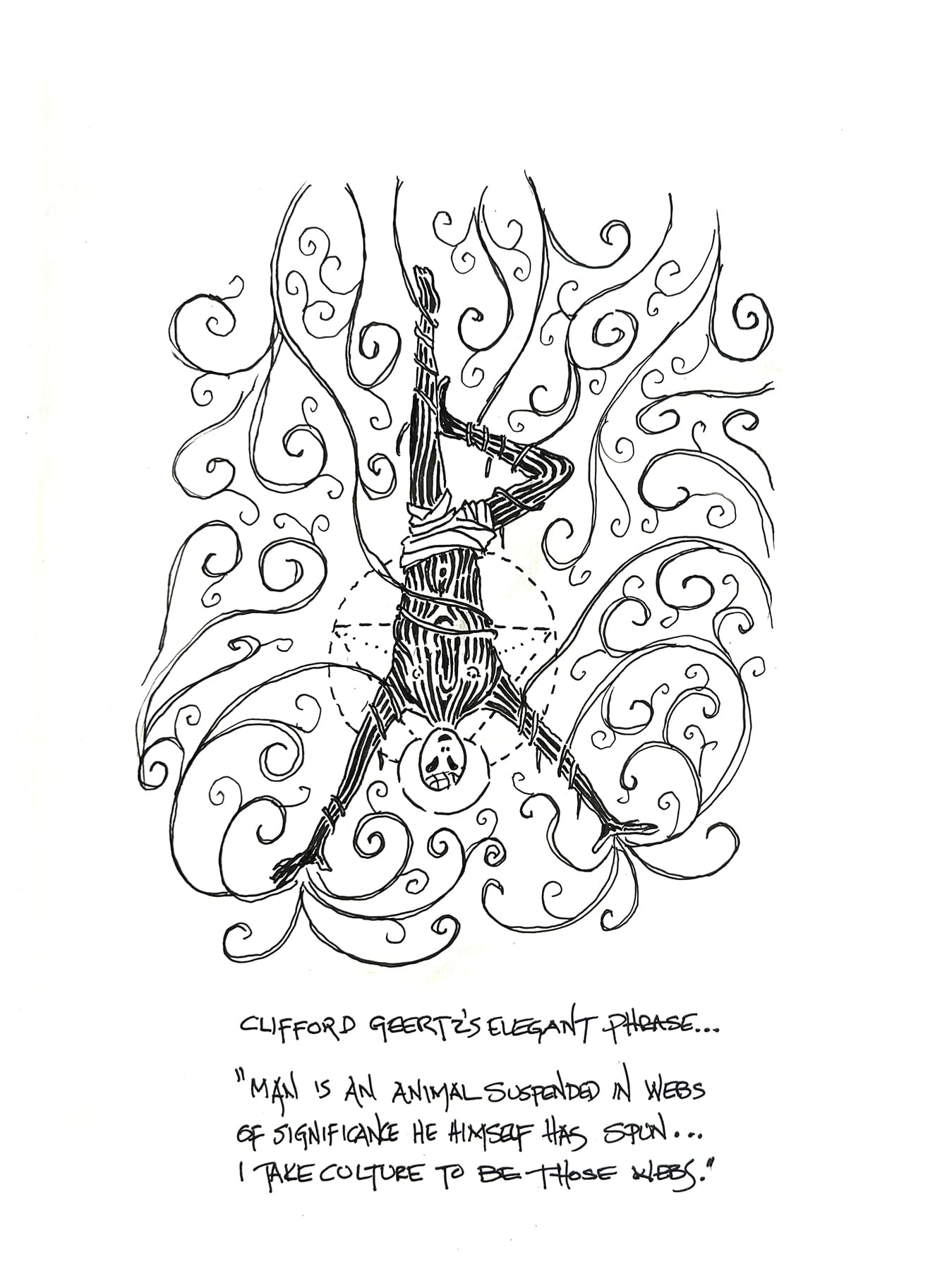

The phrase “man is an animal suspended in webs of significance he himself has spun” comes from Clifford Geertz, who argued that culture consists of shared symbolic systems—language, rituals, stories—within which humans live and make meaning. Meaning is not simply discovered in the world but produced inside these webs. This insight connects directly to Language Machines, where large language models expose how much meaning already circulates within language itself, without direct reference to external reality.

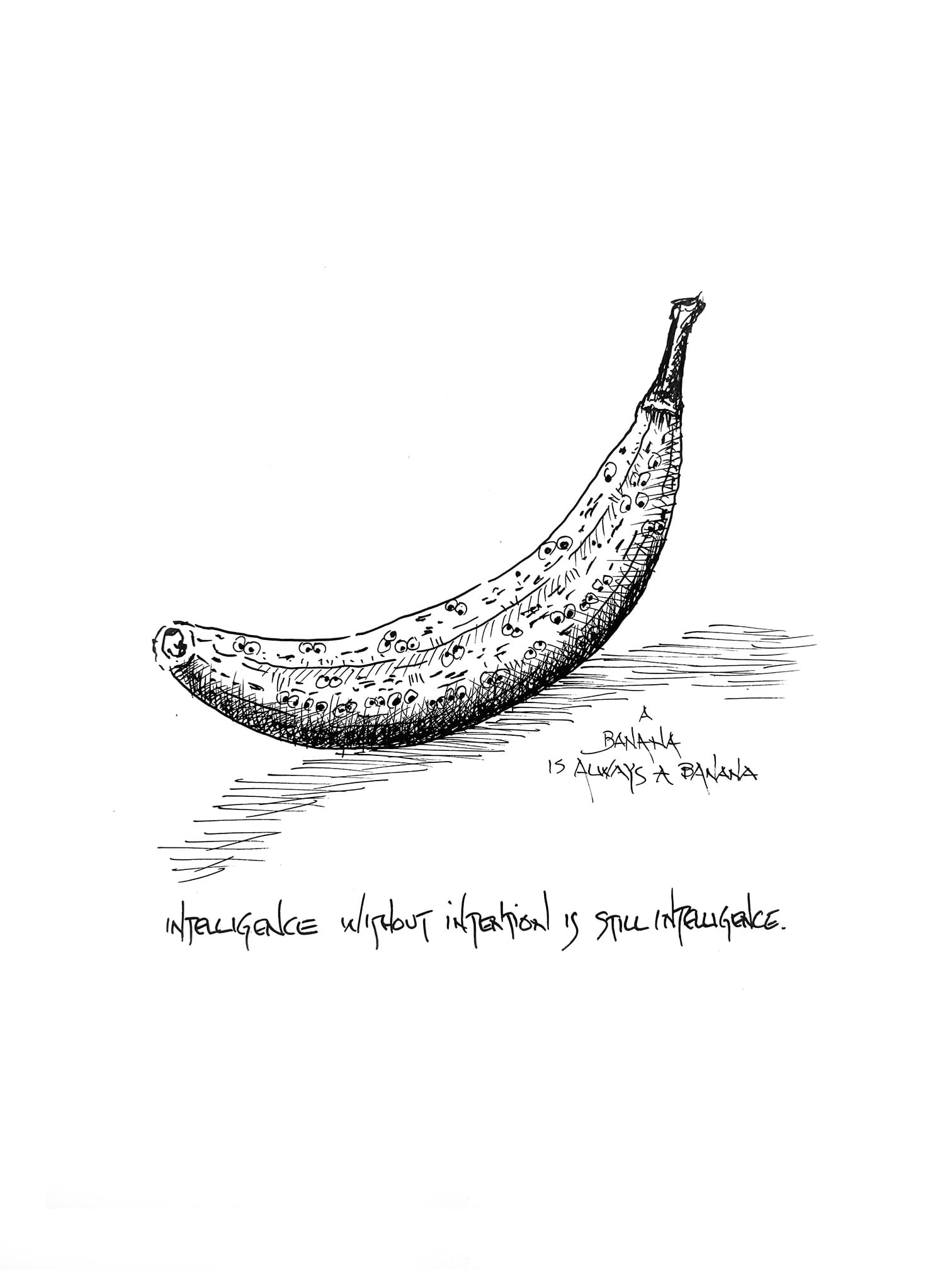

The idea that intelligence without intention is still intelligence challenges the belief that thinking requires consciousness or desire. In Language Machines, large language models demonstrate this by producing coherent and meaningful language without understanding or intent. Their intelligence arises from patterned responsiveness within linguistic systems, revealing that much of what we call intelligence depends on structure and shared culture rather than inner experience.

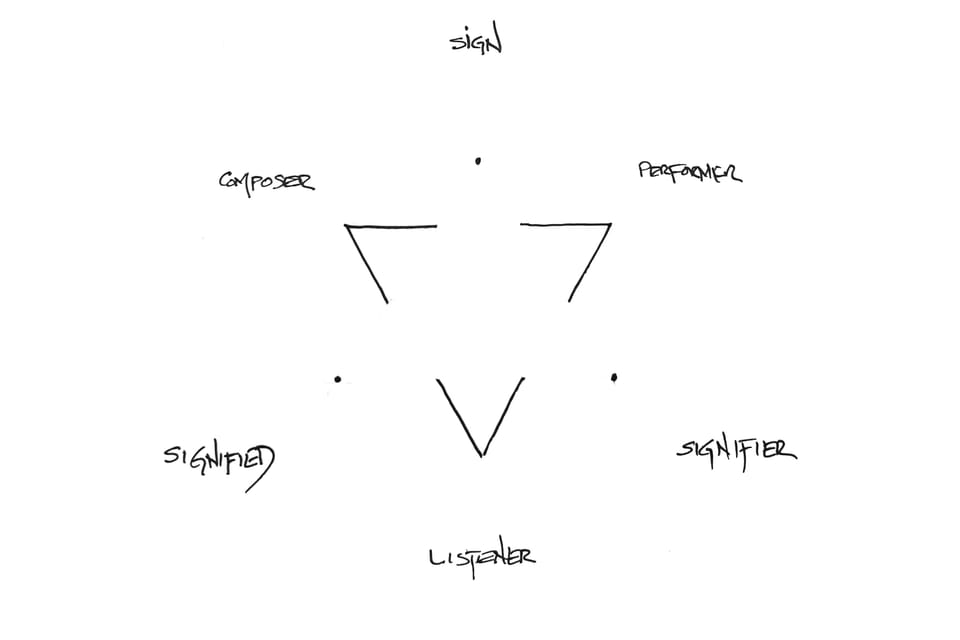

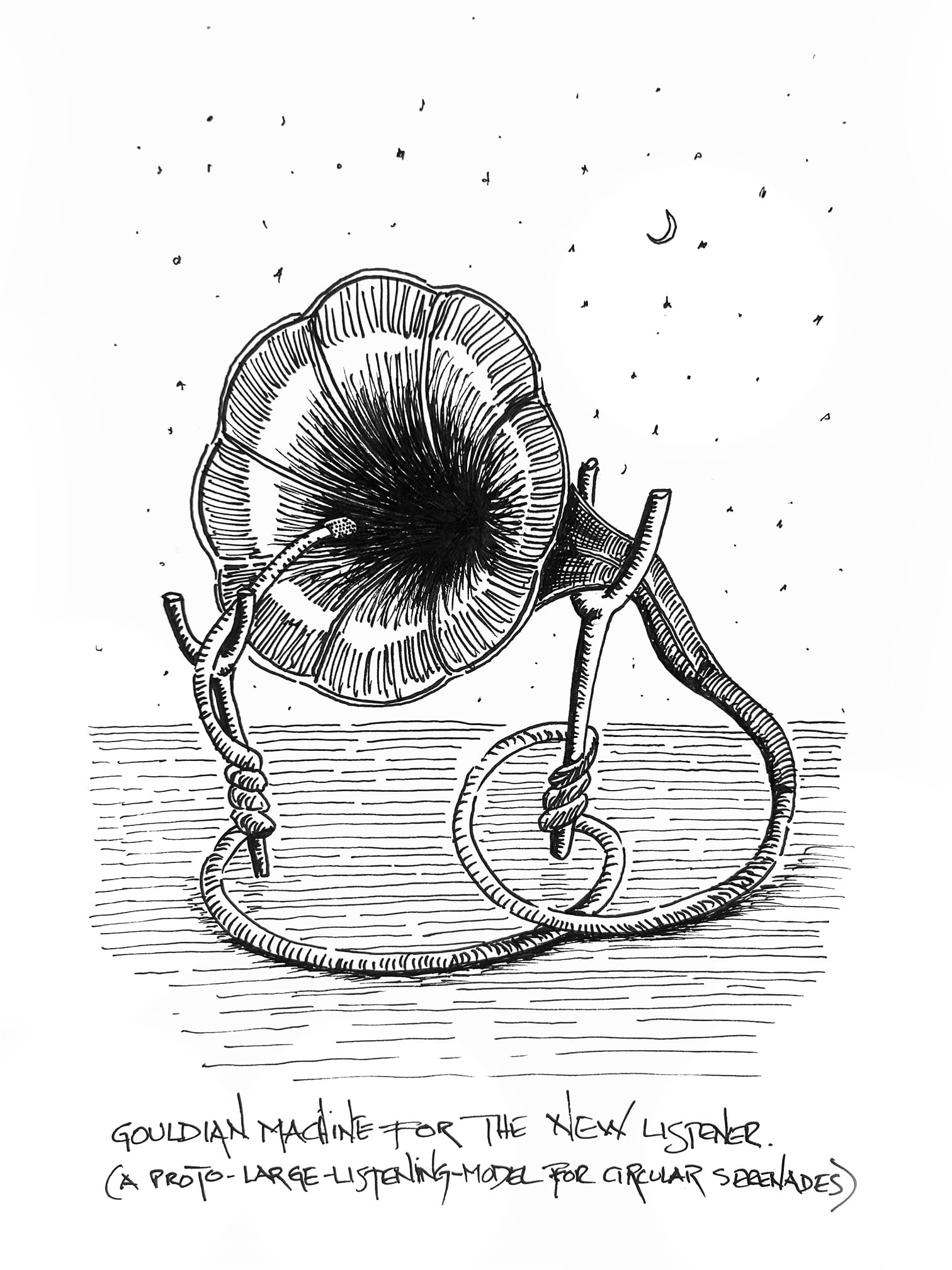

The connection between Glenn Gould’s New Listener and language machines lies in their shared removal of the human from the center of meaning. Gould treated listening as a constructed process shaped by recording and arrangement, not live intention. Language machines echo this by showing how coherence can emerge from language responding to itself, without consciousness, placing humans inside a larger circuit of signs rather than at its origin.